Einops

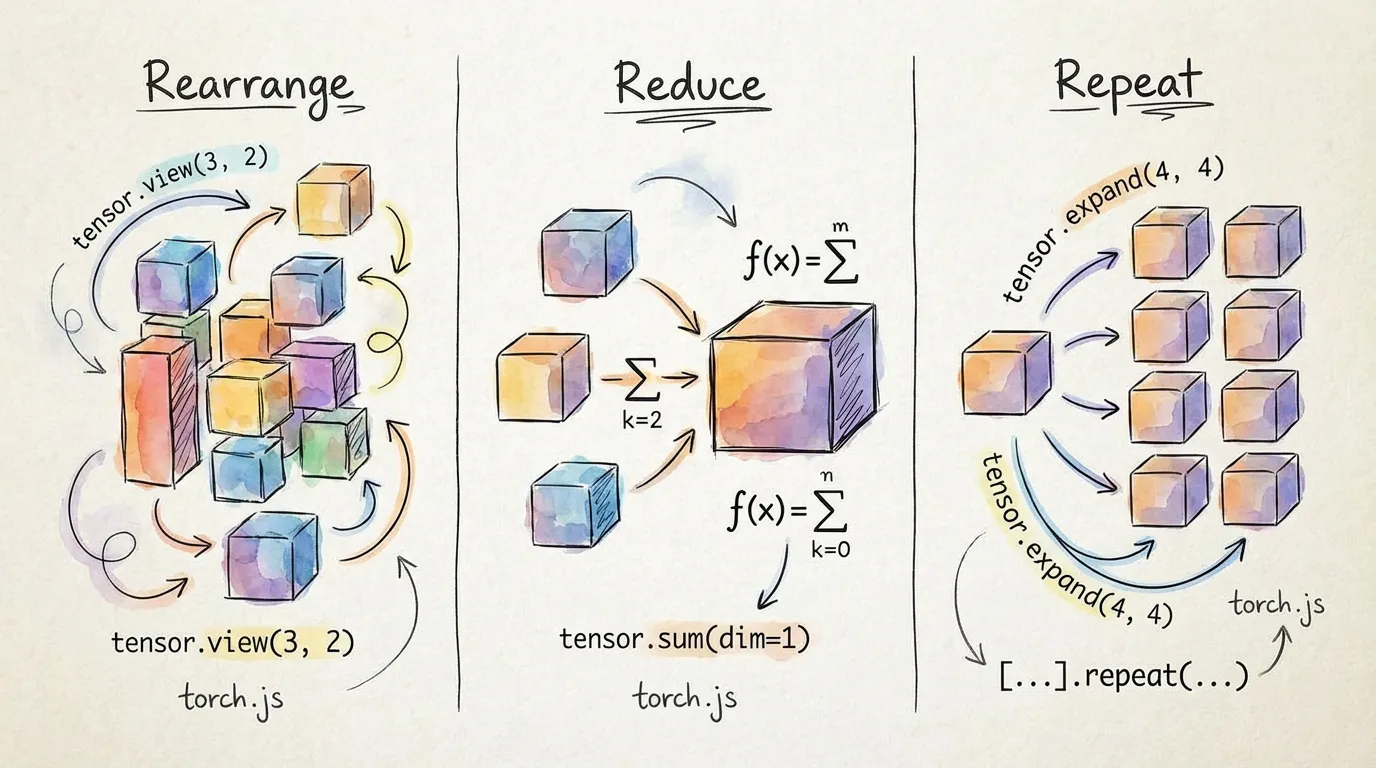

Einops-style operations provide a powerful and readable way to rearrange, reduce, and repeat tensors. In torch.js, these operations are fully type-safe, meaning the output shape is inferred at compile time and pattern errors are caught before you run your code.

Overview

Einops uses a declarative pattern language to describe how dimensions should be transformed.

import torch, { rearrange, einops_reduce, einops_repeat } from '@torchjsorg/torch.js';

const x = torch.randn(2, 3, 4, 5); // [batch, channels, height, width]

// Flatten spatial dimensions

const flat = rearrange(x, 'b c h w -> b (c h w)'); // Tensor<[2, 60]>

// Average pool over spatial dimensions

const pooled = einops_reduce(x, 'b c h w -> b c', 'mean'); // Tensor<[2, 3]>

// Repeat along a new dimension

const repeated = einops_repeat(x, 'b c h w -> b c h w 3'); // Tensor<[2, 3, 4, 5, 3]>

rearrange

The rearrange function is a Swiss Army knife for tensor shapes. It combines reshape, permute, view, squeeze, and unsqueeze into a single readable call.

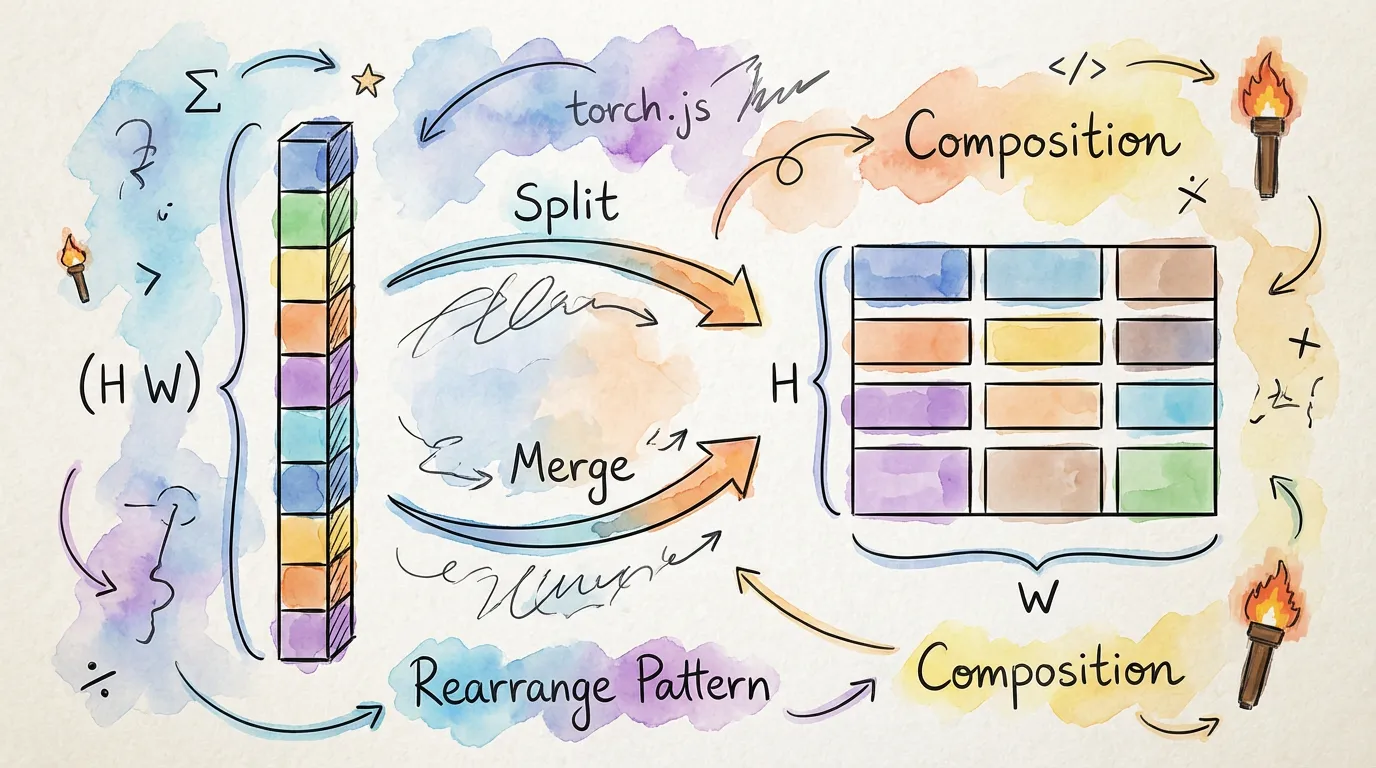

Basic Patterns

const x = torch.randn(2, 3, 4, 5);

// Transpose: swap channels and height

rearrange(x, 'b c h w -> b h c w'); // [2, 4, 3, 5]

// Flatten: combine multiple dimensions

rearrange(x, 'b c h w -> (b c) (h w)'); // [6, 20]

// Split: break one dimension into two (requires axis sizes)

const y = torch.randn(12, 10);

rearrange(y, '(h w) c -> h w c', { h: 3 }); // [3, 4, 10]

Axis Inference: Notice in the split example that we only provided h: 3. Einops automatically calculated that w must be 4 because the original dimension was 12.
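The inference rule can be sketched in plain TypeScript. This is only an illustration of the arithmetic, not the torch.js implementation; `inferGroupSizes` is a hypothetical helper name, and it handles a single parenthesized group with at most one unknown axis.

```typescript
// Hypothetical helper, not part of torch.js: resolves the sizes of the axes
// inside one parenthesized group such as '(h w)'.
function inferGroupSizes(
  dimSize: number,
  axes: string[],
  known: Record<string, number>,
): Record<string, number> {
  let knownProduct = 1;
  const unknown: string[] = [];
  for (const name of axes) {
    const size = known[name];
    if (size === undefined) unknown.push(name);
    else knownProduct *= size;
  }
  if (unknown.length > 1) {
    throw new Error(`Cannot infer more than one axis: ${unknown.join(', ')}`);
  }
  if (dimSize % knownProduct !== 0) {
    throw new Error(`Dimension ${dimSize} is not divisible by ${knownProduct}`);
  }
  if (unknown.length === 0 && knownProduct !== dimSize) {
    throw new Error(`Axis sizes multiply to ${knownProduct}, expected ${dimSize}`);
  }
  // The single unknown axis (if any) takes whatever factor is left over.
  const sizes: Record<string, number> = {};
  for (const name of axes) {
    sizes[name] = known[name] ?? dimSize / knownProduct;
  }
  return sizes;
}

const sizes = inferGroupSizes(12, ['h', 'w'], { h: 3 });
console.log(sizes); // { h: 3, w: 4 }
```

Supplying a size that does not divide the dimension, such as h: 5 for a dimension of 12, throws in this sketch.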

reduce (einops_reduce)

Perform mathematical reductions (sum, mean, max, min) while simultaneously rearranging the tensor.

| Operation | Description |
|---|---|
| sum | Sum of elements |
| mean | Average of elements |
| max | Maximum element |
| min | Minimum element |
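To make the four operations concrete, here is a minimal plain-array sketch of what a pattern like 'h w -> h' computes: the w axis disappears from the output, and each row collapses to a single value. `reduceLastAxis` and `ReduceOp` are hypothetical names used for illustration and are not part of torch.js.

```typescript
type ReduceOp = 'sum' | 'mean' | 'max' | 'min';

// Hypothetical helper: applies the pattern 'h w -> h' to a plain 2-D array,
// collapsing the w axis with the chosen operation.
function reduceLastAxis(rows: number[][], op: ReduceOp): number[] {
  return rows.map((row) => {
    switch (op) {
      case 'sum':
        return row.reduce((acc, v) => acc + v, 0);
      case 'mean':
        return row.reduce((acc, v) => acc + v, 0) / row.length;
      case 'max':
        return Math.max(...row);
      case 'min':
        return Math.min(...row);
    }
  });
}

const data = [
  [1, 2, 3],
  [4, 5, 9],
];
console.log(reduceLastAxis(data, 'sum'));  // [ 6, 18 ]
console.log(reduceLastAxis(data, 'mean')); // [ 2, 6 ]
console.log(reduceLastAxis(data, 'max'));  // [ 3, 9 ]
console.log(reduceLastAxis(data, 'min'));  // [ 1, 4 ]
```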

const images = torch.randn(32, 3, 224, 224);

// Global average pooling

const gap = einops_reduce(images, 'b c h w -> b c', 'mean'); // [32, 3]

// Max pooling over 2x2 patches

const pooled = einops_reduce(images, 'b c (h p1) (w p2) -> b c h w', 'max', { p1: 2, p2: 2 });

// [32, 3, 112, 112]

repeat (einops_repeat)

The einops_repeat function allows you to tile or broadcast data along new or existing dimensions.
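For intuition, the two common cases can be sketched on plain arrays: adding a new axis ('c -> b c') copies the data b times, while repeating inside a composed axis ('h w -> (h r) (w r)') duplicates each element r times in place. This follows the usual einops convention that the first name in a group varies slowest. `repeatNewAxis` and `repeatUpsample` are hypothetical helpers, not torch.js functions.

```typescript
// 'c -> b c': copy a vector b times along a new leading axis.
function repeatNewAxis(vec: number[], b: number): number[][] {
  return Array.from({ length: b }, () => [...vec]);
}

// 'h w -> (h r) (w r)': repeat every element r times along both axes.
// Output index i maps back to input row floor(i / r), and likewise for columns.
function repeatUpsample(img: number[][], r: number): number[][] {
  const h = img.length;
  const w = img[0]?.length ?? 0;
  return Array.from({ length: h * r }, (_, i) =>
    Array.from({ length: w * r }, (_, j) => img[Math.floor(i / r)][Math.floor(j / r)]),
  );
}

const tiled = repeatNewAxis([7, 8], 3);
// rows: [7, 8], [7, 8], [7, 8]

const upsampled = repeatUpsample([[1, 2], [3, 4]], 2);
// rows: [1, 1, 2, 2], [1, 1, 2, 2], [3, 3, 4, 4], [3, 3, 4, 4]
console.log(tiled.length, upsampled.length); // 3 4
```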

const weights = torch.randn(10);

// Broadcast for batch processing

const batched = einops_repeat(weights, 'c -> b c', { b: 32 }); // [32, 10]

// Upsample an image by repetition

const small = torch.randn(8, 8);

const large = einops_repeat(small, 'h w -> (h 4) (w 4)'); // [32, 32]

Type Safety & Errors

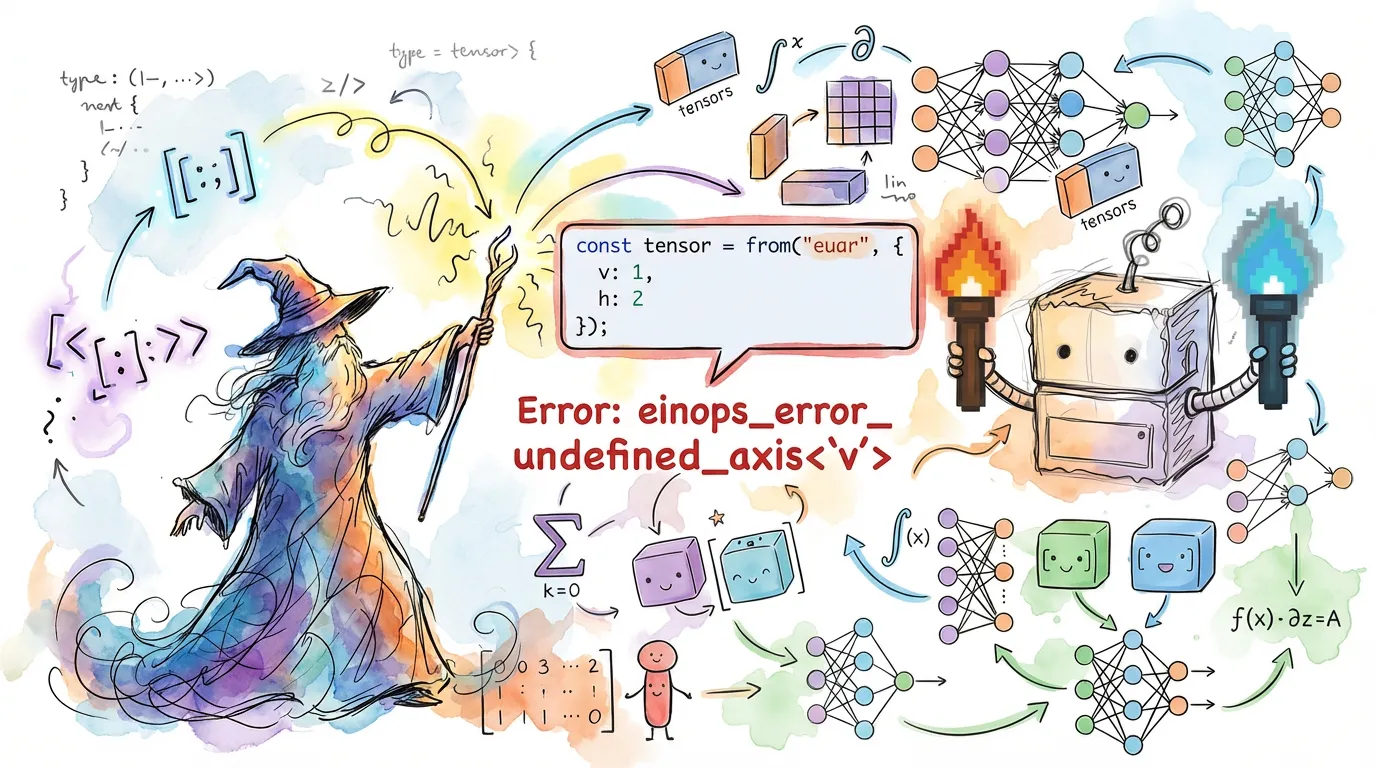

Because torch.js parses these strings at the type level, you get instant feedback if your pattern is invalid.

const x = torch.zeros(2, 3);

// Error: pattern has 3 dims but tensor has 2

rearrange(x, 'a b c -> c b a');

// Error: axis v is not defined in the input

rearrange(x, 'a b -> a v');
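How can a string be checked at compile time? One plausible mechanism is TypeScript's template literal types. The sketch below is an assumption about the general technique, not the actual torch.js type definitions: all type names and the `checkedPattern` function are hypothetical, and it only handles flat patterns without parentheses.

```typescript
// Split a space-separated axis list such as 'a b c' into a tuple type.
type SplitAxes<S extends string> =
  S extends `${infer Head} ${infer Rest}` ? [Head, ...SplitAxes<Rest>]
  : S extends '' ? []
  : [S];

// Axis lists on each side of '->'.
type InputAxes<P extends string> =
  P extends `${infer Input} -> ${string}` ? SplitAxes<Input> : never;
type OutputAxes<P extends string> =
  P extends `${string} -> ${infer Output}` ? SplitAxes<Output> : never;

// Small helpers to read the length and element union of a tuple type.
type Length<T> = T extends readonly unknown[] ? T['length'] : never;
type Elements<T> = T extends readonly (infer E)[] ? E : never;

// Output axes that never appear in the input.
type UnknownAxes<P extends string> =
  Exclude<Elements<OutputAxes<P>>, Elements<InputAxes<P>>>;

// Resolves to the pattern itself when it is valid for the shape, else never.
type ValidPattern<Shape extends readonly number[], P extends string> =
  Length<InputAxes<P>> extends Shape['length']
    ? ([UnknownAxes<P>] extends [never] ? P : never)
    : never;

// Hypothetical stub: it only echoes the pattern; the checking lives in the types.
function checkedPattern<Shape extends readonly number[], P extends string>(
  shape: readonly [...Shape],
  pattern: ValidPattern<Shape, P>,
): P {
  return pattern;
}

const ok = checkedPattern([2, 3], 'a b -> b a');
console.log(ok); // a b -> b a

// With these types, the calls below should be rejected by the compiler:
// checkedPattern([2, 3], 'a b c -> c b a'); // rank mismatch: 3 axes vs 2 dims
// checkedPattern([2, 3], 'a b -> a v');     // 'v' is not an input axis
```

An invalid pattern resolves the parameter type to never, so no string argument is assignable to it and the error surfaces at the call site.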

Why Use Einops?

- Readability: b c h w -> b (h w) c is much clearer than a chain of .permute().reshape().

- Maintainability: Named axes make it easy to understand the semantics of a transformation months later.

- Safety: Catching dimension mismatches at compile time prevents hours of debugging runtime shape errors.

Next Steps

- Einsum - Using Einstein notation for complex math.

- Type Safety - How shape generics power these operations.