Einstein Summation

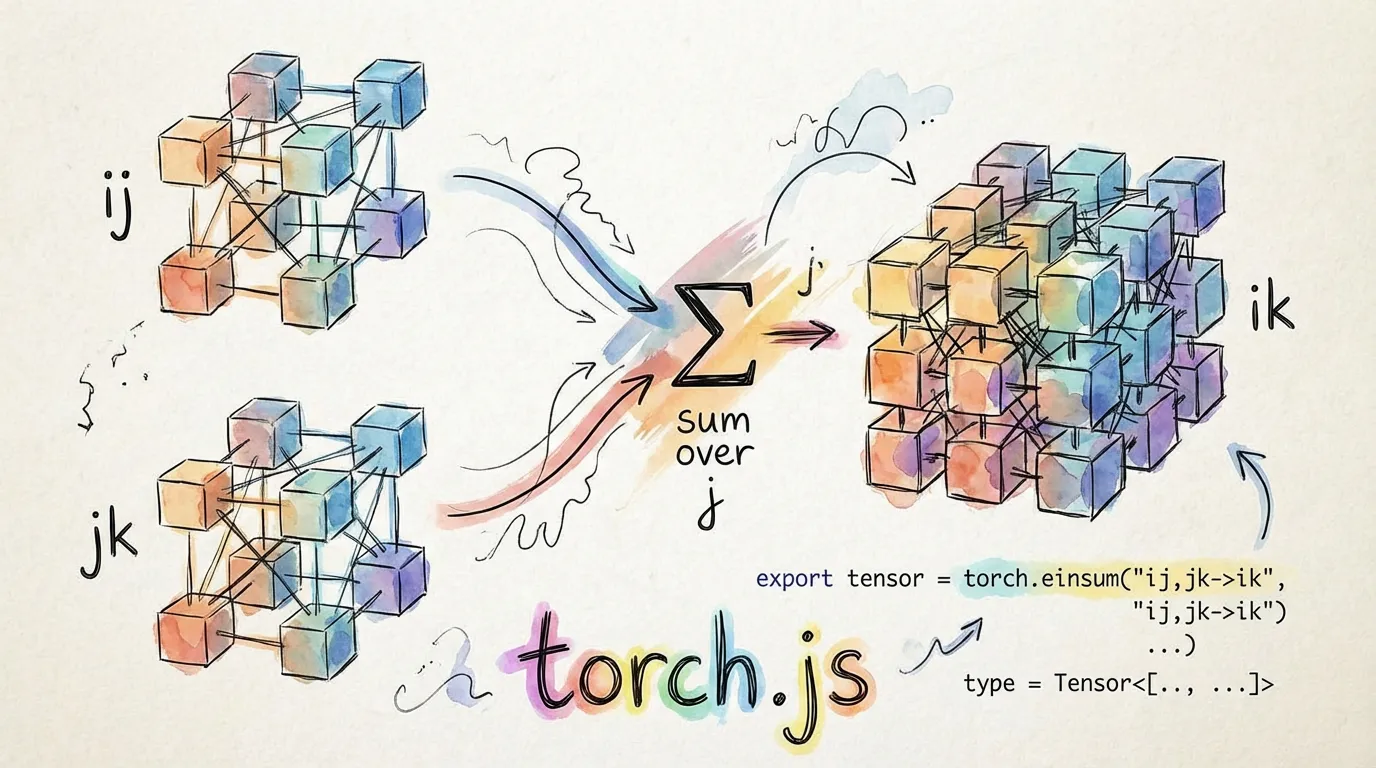

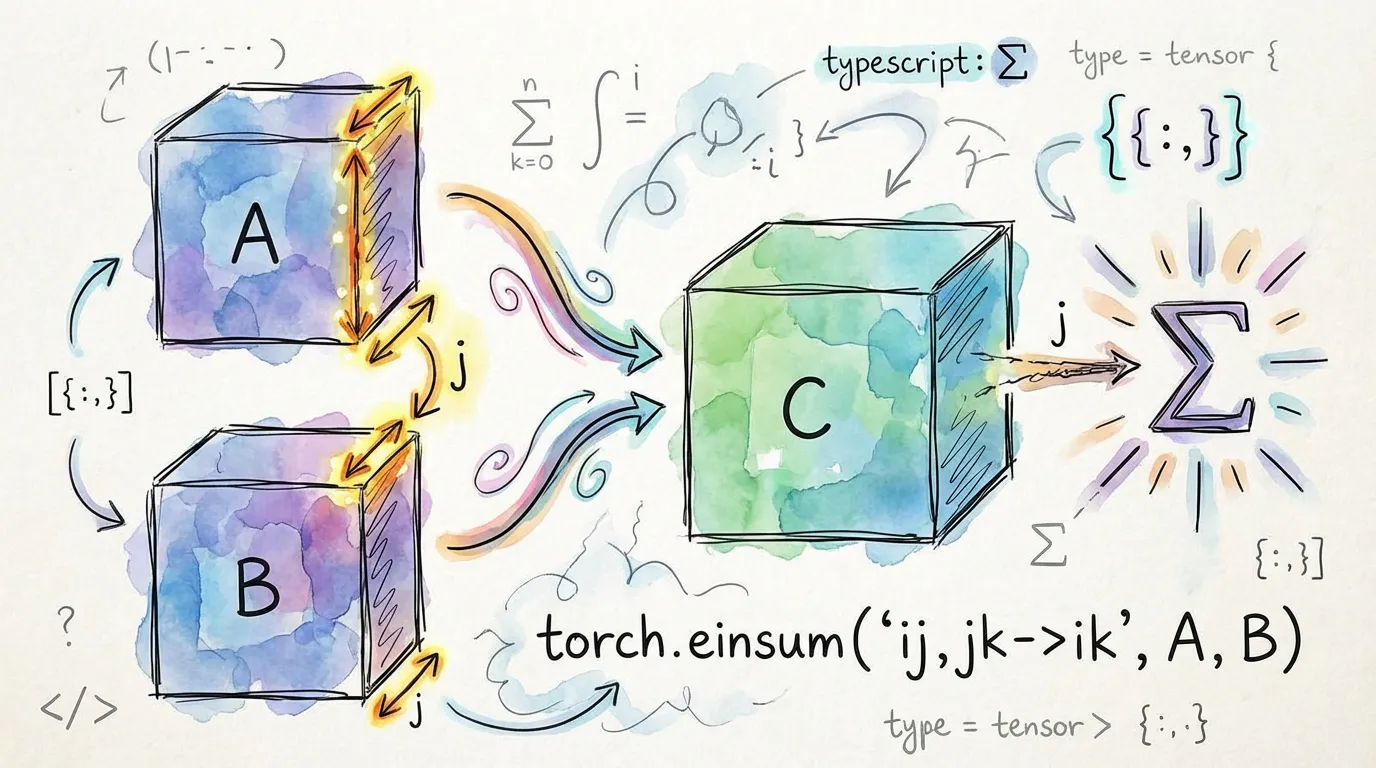

Einstein summation (einsum) is a concise domain-specific language for expressing complex tensor operations like matrix multiplication, dot products, and contractions. In torch.js, einsum is fully type-safe and parses your equations at compile time.

Basic Usage

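An equation follows the format input_subscripts -> output_subscripts. The input subscripts are comma-separated, one group of letters per operand, and any index that appears in the inputs but not in the output is summed over.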
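To make the notation concrete, here is a minimal sketch in plain TypeScript, with no torch.js involved, that spells out the equation `ij,jk->ik` as explicit loops over nested arrays. The helper name `matmulByHand` is made up for this illustration.

```typescript
// Plain-array reading of the equation 'ij,jk->ik':
//   c[i][k] = sum over j of a[i][j] * b[j][k]
// `matmulByHand` is a hypothetical helper, not part of torch.js.
function matmulByHand(a: number[][], b: number[][]): number[][] {
  const c: number[][] = [];
  for (let i = 0; i < a.length; i++) {
    // One output row per value of i, with one entry per value of k.
    const row: number[] = b[0].map(() => 0);
    for (let k = 0; k < b[0].length; k++) {
      for (let j = 0; j < b.length; j++) {
        // j is absent from the output subscripts, so it is the summed index.
        row[k] += a[i][j] * b[j][k];
      }
    }
    c.push(row);
  }
  return c;
}

const product = matmulByHand(
  [[1, 2, 3], [4, 5, 6]],   // shape [2, 3]
  [[1, 0], [0, 1], [1, 1]]  // shape [3, 2]
);
console.log(product); // [[4, 5], [10, 11]]
```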

import torch from '@torchjsorg/torch.js';

const a = torch.randn(2, 3);

const b = torch.randn(3, 4);

// Matrix multiplication: ij,jk -> ik

const c = torch.einsum('ij,jk->ik', a, b); // Tensor<[2, 4]>

// Dot product: i,i -> (scalar)

const x = torch.randn(5);

const dot = torch.einsum('i,i->', x, x); // Tensor<[]>

Common Patterns

The type system automatically recognizes these standard operations and provides precise output shapes.

| Pattern | Operation | Output Shape |
|---|---|---|
| ij,jk->ik | Matrix Multiply | [M, N] |
| bij,bjk->bik | Batch Matmul | [B, M, N] |
| i,j->ij | Outer Product | [M, N] |
| ij->ji | Transpose | [N, M] |
| ii-> | Trace | [] (scalar) |
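The Output Shape column can be reproduced with a small runtime helper. The sketch below is an illustration only: `einsumShape` is a hypothetical function, it works on plain shape arrays rather than tensors, it requires an explicit `->`, and it does not handle the ellipsis. torch.js derives the same information in its type system, and that machinery is not shown here.

```typescript
// Hypothetical helper: infers an einsum output shape from operand shapes.
// Handles plain letter subscripts only; not part of torch.js.
function einsumShape(equation: string, ...shapes: number[][]): number[] {
  const parts = equation.replace(/\s+/g, '').split('->');
  if (parts.length !== 2) throw new Error("this sketch requires an explicit '->'");
  const inputs = parts[0].split(',');
  const output = parts[1];
  if (inputs.length !== shapes.length) {
    throw new Error(`expected ${inputs.length} operands, got ${shapes.length}`);
  }

  // Record the size bound to each label and check that repeats agree.
  const sizes: Record<string, number> = {};
  inputs.forEach((subscript, n) => {
    const shape = shapes[n];
    if (subscript.length !== shape.length) {
      throw new Error(`subscript ${subscript} has ${subscript.length} chars but tensor has rank ${shape.length}`);
    }
    subscript.split('').forEach((label, axis) => {
      if (label in sizes && sizes[label] !== shape[axis]) {
        throw new Error(`dimension ${label} has inconsistent sizes (${sizes[label]} vs ${shape[axis]})`);
      }
      sizes[label] = shape[axis];
    });
  });

  // Each output label takes its recorded size; labels left out are summed away.
  return output.split('').map((label) => {
    if (!(label in sizes)) throw new Error(`output label ${label} does not appear in the inputs`);
    return sizes[label];
  });
}

console.log(einsumShape('ij,jk->ik', [2, 3], [3, 4]));          // [2, 4]
console.log(einsumShape('bij,bjk->bik', [8, 2, 3], [8, 3, 4])); // [8, 2, 4]
console.log(einsumShape('i,j->ij', [2], [5]));                  // [2, 5]
console.log(einsumShape('ij->ji', [2, 3]));                     // [3, 2]
console.log(einsumShape('ii->', [3, 3]));                       // []
```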

Ellipsis (...) Support

The ellipsis (...) allows you to perform operations on the trailing dimensions while preserving any number of leading batch dimensions.
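One way to picture the ellipsis is as shorthand for extra explicit labels. The sketch below rewrites `...` into uppercase batch labels given each operand's rank. `expandEllipsis` is a hypothetical helper, not a torch.js function. It assumes your own subscripts use lowercase letters, and it aligns batch dimensions from the right in the NumPy broadcasting style, which is an assumption about how torch.js behaves.

```typescript
// Hypothetical helper: rewrites '...' into explicit batch labels so the
// equation reads like an ordinary one. Not part of torch.js.
function expandEllipsis(equation: string, ranks: number[]): string {
  const [lhs, rhs] = equation.split('->');
  const inputs = lhs.split(',');
  const pool = 'ABCDEFGH'; // uppercase stand-ins for batch dimensions

  // Number of dimensions each operand's '...' has to absorb.
  const batchCounts = inputs.map((subscript, n) => {
    if (subscript.indexOf('...') === -1) return 0;
    const named = subscript.replace('...', '').length;
    const count = ranks[n] - named;
    if (count < 0) throw new Error(`operand ${n} has too few dimensions for '${subscript}'`);
    return count;
  });
  const maxBatch = Math.max(0, ...batchCounts);
  if (maxBatch > pool.length) throw new Error('too many batch dimensions for this sketch');

  // Align labels from the right (assumed NumPy-style broadcasting).
  const expanded = inputs.map((subscript, n) =>
    subscript.replace('...', pool.slice(maxBatch - batchCounts[n], maxBatch))
  );
  const output = rhs.replace('...', pool.slice(0, maxBatch));
  return `${expanded.join(',')}->${output}`;
}

console.log(expandEllipsis('...ij,...jk->...ik', [4, 4])); // 'ABij,ABjk->ABik'
console.log(expandEllipsis('...ij,...jk->...ik', [3, 2])); // 'Aij,jk->Aik'
```

With both operands at rank 4, the two leading dimensions become the batch labels `A` and `B`, and only `i`, `j`, and `k` take part in the matrix product.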

// Batch matrix multiply with arbitrary batch dims

const batchA = torch.randn(2, 3, 4, 5); // [batch1, batch2, M, K]

const batchB = torch.randn(2, 3, 5, 6); // [batch1, batch2, K, N]

const res = torch.einsum('...ij,...jk->...ik', batchA, batchB);

// Result: [2, 3, 4, 6]

Multi-Operand Contractions

You can contract any number of tensors in a single call. This is often more efficient and readable than chaining multiple matmul calls.

// Three-way matrix multiplication

// A: [I, J], B: [J, K], C: [K, L] -> result: [I, L]

const result = torch.einsum('ij,jk,kl->il', A, B, C);

Under the Hood: torch.js optimizes einsum by mapping recognized patterns directly to high-performance WebGPU kernels like matmul or bmm.
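Mathematically, the three-way contraction gives the same result as chaining two matrix products. The sketch below checks this on plain nested arrays. The helpers `matmul` and `chain3` are hypothetical and written for clarity, not speed, and they say nothing about how torch.js schedules the contraction internally.

```typescript
type Matrix = number[][];

// Plain-array matrix product: out[i][k] = sum over j of a[i][j] * b[j][k].
function matmul(a: Matrix, b: Matrix): Matrix {
  return a.map((row) =>
    b[0].map((_, k) => row.reduce((sum, value, j) => sum + value * b[j][k], 0))
  );
}

// Direct reading of 'ij,jk,kl->il': j and k are both summed in one pass.
function chain3(a: Matrix, b: Matrix, c: Matrix): Matrix {
  const out: Matrix = a.map(() => c[0].map(() => 0));
  for (let i = 0; i < a.length; i++) {
    for (let j = 0; j < b.length; j++) {
      for (let k = 0; k < c.length; k++) {
        for (let l = 0; l < c[0].length; l++) {
          out[i][l] += a[i][j] * b[j][k] * c[k][l];
        }
      }
    }
  }
  return out;
}

const left: Matrix = [[1, 2], [3, 4]];
const middle: Matrix = [[0, 1], [1, 0]];
const right: Matrix = [[2, 0], [0, 2]];

const direct = chain3(left, middle, right);
const chained = matmul(matmul(left, middle), right);
console.log(direct);                                               // [[4, 2], [8, 6]]
console.log(JSON.stringify(direct) === JSON.stringify(chained));   // true
```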

Type Safety & Validation

Just like our Einops implementation, einsum validates your equations at compile time.
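To give a feel for how an equation can be checked before the program runs, here is a minimal sketch built on TypeScript template literal types. The type names `Chars` and `RankMatches` are hypothetical and cover only the rank check. They are not torch.js's actual type definitions, which this guide does not show.

```typescript
// Hypothetical helper types; not taken from torch.js.

// Split a subscript string into a tuple of single characters: 'ij' -> ['i', 'j'].
type Chars<S extends string> =
  S extends `${infer Head}${infer Tail}` ? [Head, ...Chars<Tail>] : [];

// true when the subscript has exactly one letter per tensor dimension.
type RankMatches<Sub extends string, Shape extends readonly number[]> =
  Chars<Sub>['length'] extends Shape['length'] ? true : false;

// These assignments only type-check if the types evaluate as annotated.
const matrixOk: RankMatches<'ij', [2, 3]> = true;         // 2 letters, rank 2
const rankMismatch: RankMatches<'ij', [2, 3, 4]> = false; // 2 letters, rank 3

console.log(matrixOk, rankMismatch); // true false
```

A library can turn a `false` result like this into a compile error by making the offending argument's type `never` or a descriptive error type. Which approach torch.js takes is not covered here.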

// Error: dimension j has inconsistent sizes (3 vs 5)

torch.einsum('ij,jk->ik', torch.randn(2, 3), torch.randn(5, 4));

// Error: subscript ij has 2 chars but tensor has rank 3

torch.einsum('ij,jk->ik', torch.randn(2, 3, 4), torch.randn(4, 5));

Why Use Einsum?

Einsum is often the cleanest way to express operations that involve complex broadcasting or reduction across multiple axes. Instead of mentally tracking dimension indices (0, 1, 2), you use descriptive letters that document the mathematical intent of the operation.

Next Steps

- Tensor Indexing - Slicing tensors with .at()

- Best Practices - Optimizing your WebGPU computation pipeline.